KVM architecture

TL;DR

Edera zones currently run on Xen. Starting in summer 2026, the same zone-based isolation will also run on KVM—preserving identical security guarantees while meeting teams where their infrastructure already is.

Why KVM

KVM represents a deliberate infrastructure choice for many organizations, backed by years of tooling, expertise, and certification work. Requiring a hypervisor swap to adopt strong container isolation is a non-starter for most of these teams.

Edera is extending its isolation model to KVM so that enterprises can adopt zone-based security without rebuilding their infrastructure.

Architectural comparison

Edera’s security model centers on zones: single-tenant execution environments with isolated kernels, address spaces, device namespaces, and independent lifecycles. Because zones never share a kernel, they are not exposed to the shared-kernel vulnerabilities that undermine conventional container security.

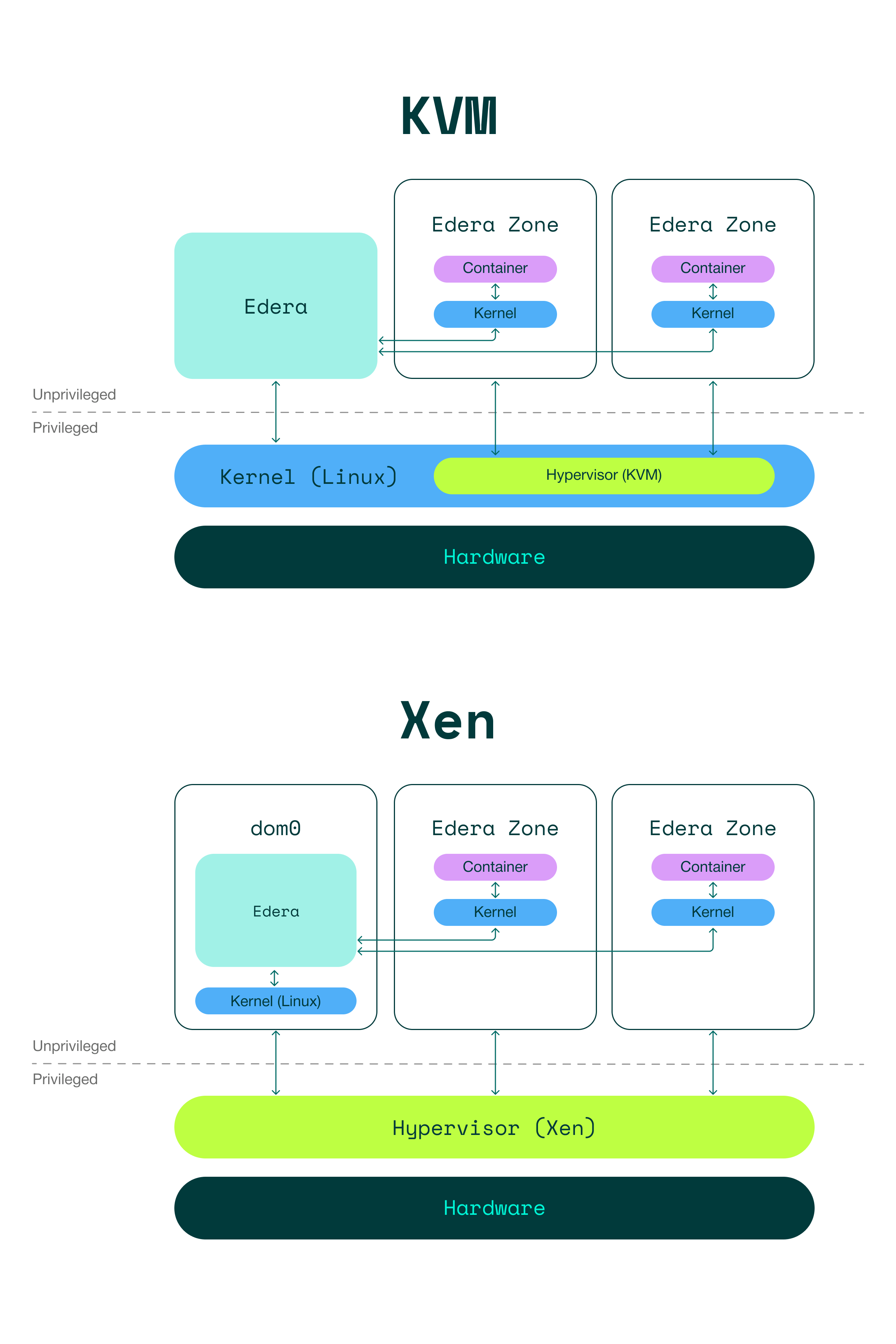

On both hypervisors, each zone runs its own Linux kernel and provides hardware-enforced isolation boundaries. The key difference is where enforcement responsibility lives.

Xen architecture

With Xen, the hypervisor sits beneath everything—it runs directly on hardware at the highest privilege level, below the host kernel.

- dom0 (the management domain) runs the host Linux kernel and Edera, with large portions of the Xen control stack rewritten in Rust for safety and maintainability

- Each Edera Zone runs as an unprivileged domU guest with its own dedicated kernel

- The hypervisor directly mediates all memory allocation, CPU scheduling, and interrupt routing

- Zone boundaries are enforced mechanically by the hypervisor—the host kernel never mediates them

This gives Xen clean, mechanically enforced memory and CPU boundaries. The hypervisor owns the enforcement logic, and the host kernel has no ability to violate zone isolation.

KVM architecture

With KVM, the hypervisor is a module inside the Linux kernel rather than a layer beneath it.

- The Edera management processes run unprivileged on the host system

- Each Edera Zone runs inside an unprivileged VMM process that uses the KVM device (`/dev/kvm`) and boots the zone’s own dedicated kernel

- VMM processes are scheduled by the host Linux kernel like any other process

- Memory for the zones is allocated from generic system memory, managed by the Linux kernel memory subsystem

Because KVM delegates CPU and memory management to the host kernel rather than to a dedicated hypervisor layer, both are less deterministic and more easily influenced by other activity on the host. This shifts significant responsibility onto Edera’s runtime to enforce CPU and memory usage guardrails.
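Under KVM, a zone’s virtual machine is simply a set of file descriptors held by an ordinary host process. The Python sketch below shows the first two steps any KVM-based VMM takes against the KVM device, using the ioctl numbers from `<linux/kvm.h>`. It illustrates the kernel interface only and is not Edera’s VMM. On hosts without VT-x/AMD-V, without the `kvm` module, or without permission on `/dev/kvm`, the probe returns `None`.

```python
import fcntl
import os
from typing import Optional

# From <linux/kvm.h>: KVMIO is 0xAE and neither request carries an argument
# struct, so _IO(KVMIO, nr) reduces to (KVMIO << 8) | nr.
KVMIO = 0xAE
KVM_GET_API_VERSION = (KVMIO << 8) | 0x00
KVM_CREATE_VM = (KVMIO << 8) | 0x01

# Per the kernel's KVM API docs, applications should refuse to run if
# KVM_GET_API_VERSION returns any other value.
KVM_API_VERSION = 12


def kvm_api_version(path: str = "/dev/kvm") -> Optional[int]:
    """Return the KVM API version, or None if the KVM device is unusable."""
    try:
        fd = os.open(path, os.O_RDWR | os.O_CLOEXEC)
    except OSError:
        # Missing device (no hardware support or module) or no permission.
        return None
    try:
        # With no argument, fcntl.ioctl returns the ioctl's integer result.
        return fcntl.ioctl(fd, KVM_GET_API_VERSION)
    finally:
        os.close(fd)


def create_vm_fd(path: str = "/dev/kvm") -> int:
    """Create an empty VM and return its file descriptor; the caller closes it.

    A real VMM would go on to register guest memory and create vCPUs on this
    descriptor. The VM is torn down once the process closes its descriptors
    or exits, which is why its lifetime follows an ordinary host process.
    """
    kvm_fd = os.open(path, os.O_RDWR | os.O_CLOEXEC)
    try:
        # Argument 0 selects the default machine type.
        return fcntl.ioctl(kvm_fd, KVM_CREATE_VM, 0)
    finally:
        os.close(kvm_fd)
```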

What changes under the hood

Memory management

On Xen, the hypervisor owns all physical memory and directly allocates it to domains, as instructed by dom0. Memory boundaries are absolute: Neither dom0 nor the zones can access memory that was not explicitly granted to them.

On KVM, memory for zones is allocated from generic system memory by the VMM processes. The host kernel’s memory subsystem manages allocation, reclaim, and overcommit across all processes. Because those decisions are shared with everything else running on the host, Edera’s runtime enforces memory usage guardrails so that zone isolation stays consistent with the Xen model even when other processes compete for memory.
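The text does not say which kernel mechanism Edera’s runtime uses for these guardrails. One standard option on Linux is the cgroup v2 memory controller, so the sketch below assumes that approach: each zone’s VMM process gets its own cgroup, capped at the zone’s RAM plus a VMM overhead allowance, with the zone’s RAM protected from host reclaim and swap disabled. `ZoneMemory`, the overhead figure, and the policy itself are hypothetical; the interface file names (`memory.max`, `memory.min`, `memory.swap.max`) are the real cgroup v2 ones.

```python
import os
from dataclasses import dataclass
from typing import Dict

MIB = 1024 * 1024


@dataclass(frozen=True)
class ZoneMemory:
    """Hypothetical per-zone memory budget."""
    guest_mib: int         # RAM the zone's kernel is given
    vmm_overhead_mib: int  # assumed allowance for the VMM's own allocations


def cgroup_memory_settings(zone: ZoneMemory) -> Dict[str, str]:
    """Translate a zone budget into cgroup v2 memory interface values."""
    guest = zone.guest_mib * MIB
    return {
        # Hard cap: if usage cannot be reclaimed below this, the OOM killer
        # acts inside this cgroup only, not against other zones.
        "memory.max": str(guest + zone.vmm_overhead_mib * MIB),
        # Hard protection (subject to ancestors' memory.min): guest RAM is
        # not reclaimed under host memory pressure.
        "memory.min": str(guest),
        # Keep guest memory out of swap entirely.
        "memory.swap.max": "0",
    }


def apply_settings(cgroup_dir: str, settings: Dict[str, str]) -> None:
    """Write each value to its interface file, e.g. under /sys/fs/cgroup/<zone>."""
    for name, value in settings.items():
        with open(os.path.join(cgroup_dir, name), "w") as f:
            f.write(value)
```

For a 2 GiB zone with a 128 MiB allowance, `cgroup_memory_settings(ZoneMemory(2048, 128))` yields `memory.max` of 2281701376 bytes and `memory.min` of 2147483648 bytes.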

Device lifecycle

On Xen, device assignment flows through the hypervisor’s grant table mechanism—zones get explicit, revocable access to specific devices.

On KVM, device assignment goes through the host kernel. Edera implements more defensive device lifecycle handling to ensure that device teardown, reassignment, and hot-plug operations maintain isolation guarantees equivalent to the Xen model.
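The text does not describe Edera’s device-handling code, so the following is only a hypothetical model of what defensive lifecycle handling can mean: a small state machine in which a device can never pass directly from one zone to another. Every path to a new owner goes through a detach and a host-side reset, and hot-plugged devices start out untrusted. `Device`, `DevState`, and the `reset_fn` hook are illustrative names; on real hardware the reset would be something like a PCI function-level reset.

```python
from enum import Enum, auto
from typing import Callable, Optional


class DevState(Enum):
    DIRTY = auto()     # hot-plugged or just detached: state unknown, not assignable
    FREE = auto()      # reset by the host, safe to hand to any zone
    ASSIGNED = auto()  # attached to exactly one zone


class LifecycleError(Exception):
    pass


class Device:
    def __init__(self, dev_id: str) -> None:
        self.dev_id = dev_id
        # Hot-plugged devices are untrusted until the host resets them.
        self.state = DevState.DIRTY
        self.owner: Optional[str] = None

    def _require(self, expected: DevState, action: str) -> None:
        if self.state is not expected:
            raise LifecycleError(
                f"{self.dev_id}: cannot {action} while {self.state.name}")

    def reset(self, reset_fn: Callable[[str], None]) -> None:
        self._require(DevState.DIRTY, "reset")
        reset_fn(self.dev_id)  # if this raises, the device stays DIRTY (fail closed)
        self.state = DevState.FREE

    def assign(self, zone: str) -> None:
        self._require(DevState.FREE, "assign")
        self.state, self.owner = DevState.ASSIGNED, zone

    def detach(self) -> None:
        self._require(DevState.ASSIGNED, "detach")
        # Teardown marks the device dirty so the next zone never sees leftover state.
        self.state, self.owner = DevState.DIRTY, None
```

Moving a device from `zone-a` to `zone-b` therefore takes `detach()`, `reset(...)`, and then `assign("zone-b")`; calling `assign("zone-b")` while the device is still assigned raises `LifecycleError`.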

CPU scheduling

On Xen, the hypervisor’s own scheduler allocates CPU time to dom0 and to zones directly. dom0 can change scheduling policy, but it takes no part in individual scheduling decisions.

On KVM, there is no separate hypervisor scheduler. The host kernel schedules its own kernel threads, the VMM processes (whose threads execute the zones’ virtual CPUs), and all other userspace processes as ordinary threads. Edera works within this model while ensuring that scheduling behavior meets the same isolation and fairness expectations as on Xen.
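As with memory, the text does not name the mechanisms Edera uses here. Because a zone’s vCPUs run on ordinary host threads under KVM, the standard Linux levers apply, and the sketch below assumes two of them: a cgroup v2 `cpu.max` quota that caps a zone at its vCPU count, and CPU affinity that pins a vCPU thread to dedicated cores. `zone_cpu_max` and `pin_thread` are hypothetical helpers; `os.sched_setaffinity` is Python’s wrapper for the Linux `sched_setaffinity(2)` call.

```python
import os
from typing import Iterable

# Default cgroup v2 period for cpu.max, in microseconds (100 ms).
DEFAULT_PERIOD_US = 100_000


def zone_cpu_max(vcpus: int, period_us: int = DEFAULT_PERIOD_US) -> str:
    """Return a cpu.max value ("$QUOTA $PERIOD") allowing at most `vcpus` CPUs.

    Within each period, the zone's threads together may run for
    vcpus * period_us microseconds, i.e. `vcpus` CPUs' worth of time.
    """
    if vcpus < 1:
        raise ValueError("a zone needs at least one vCPU")
    return f"{vcpus * period_us} {period_us}"


def pin_thread(tid: int, cpus: Iterable[int]) -> None:
    """Restrict one host thread (e.g. a vCPU thread) to the given CPUs.

    A tid of 0 means the calling thread. Linux only.
    """
    os.sched_setaffinity(tid, set(cpus))
```

A two-vCPU zone would get `zone_cpu_max(2)`, which is `"200000 100000"`: at most 200 ms of CPU time in every 100 ms window.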

What stays the same

Despite the differences in how enforcement is implemented, the guarantees delivered to workloads are identical:

| Guarantee | Xen | KVM |
|---|---|---|
| Dedicated kernel per workload | Yes | Yes |
| Isolated memory and address spaces | Yes | Yes |
| Independent device namespaces | Yes | Yes |
| Independent zone lifecycles | Yes | Yes |
| Kubernetes RuntimeClass integration | Yes | Yes |
| `protect` CLI compatibility | Yes | Yes |
| OCI image-based zone composition | Yes | Yes |

Applications require no changes to run on KVM-backed zones versus Xen-backed zones. Existing orchestration workflows, monitoring, and tooling work the same way.
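Because zones plug in through a Kubernetes RuntimeClass, the workload side of that integration is the same whichever hypervisor backs the node. The sketch below builds the two relevant manifests as Python dictionaries (Kubernetes accepts JSON as well as YAML): a `RuntimeClass` object and a Pod that opts into it through `spec.runtimeClassName`. The RuntimeClass name and handler `edera` are assumptions for illustration; use whatever names your Edera installation registers. Nothing in either manifest names Xen or KVM.

```python
import json
from typing import Any, Dict


def runtime_class(name: str, handler: str) -> Dict[str, Any]:
    """A node.k8s.io/v1 RuntimeClass mapping `name` to a CRI handler."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        # Must match a handler configured in the node's CRI runtime.
        "handler": handler,
    }


def zone_pod(name: str, image: str, runtime_class_name: str) -> Dict[str, Any]:
    """A Pod that asks to run under the given RuntimeClass."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "runtimeClassName": runtime_class_name,
            "containers": [{"name": name, "image": image}],
        },
    }


if __name__ == "__main__":
    # Hypothetical names; the List can be piped to `kubectl apply -f -`.
    rc = runtime_class("edera", handler="edera")
    pod = zone_pod("web", "nginx", rc["metadata"]["name"])
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [rc, pod]}, indent=2))
```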

When to use which

| Consideration | Xen | KVM |
|---|---|---|
| Existing infrastructure | Greenfield or Xen-based environments | Teams already running KVM |
| Enforcement model | Hypervisor-level, mechanically enforced | Host kernel + Edera runtime enforced |
| Hardware compatibility | Broad; supports PV and PVH, works in VMs without nested virtualization | Requires VT-x/AMD-V; nested virtualization needed in VMs (bare metal preferred) |
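The hardware-compatibility row can be checked directly on an x86 Linux host: the CPU flags `vmx` (Intel VT-x) and `svm` (AMD-V) in `/proc/cpuinfo` indicate hardware virtualization, and the `hypervisor` flag indicates that Linux is itself running inside a VM. The Python sketch below turns those flags into the table’s guidance. `cpu_flags`, `viable_backends`, and `kvm_is_nested` are hypothetical helpers; they ignore non-x86 architectures (whose `/proc/cpuinfo` format differs) and cannot confirm that the `kvm` module actually loads, so treat the answer as a first check only.

```python
from typing import List, Set


def cpu_flags(cpuinfo_text: str) -> Set[str]:
    """Collect the x86 'flags' entries from /proc/cpuinfo text."""
    flags: Set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Exact match skips related lines such as "vmx flags".
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def viable_backends(flags: Set[str]) -> List[str]:
    """Backends the hardware row allows on this CPU."""
    backends = ["xen"]  # Xen PV guests run even without VT-x/AMD-V
    if "vmx" in flags or "svm" in flags:
        backends.append("kvm")  # KVM requires VT-x or AMD-V
    return backends


def kvm_is_nested(flags: Set[str]) -> bool:
    """True when KVM would rely on nested virtualization (bare metal preferred)."""
    return "hypervisor" in flags and ("vmx" in flags or "svm" in flags)


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            host_flags = cpu_flags(f.read())
    except OSError:
        print("no /proc/cpuinfo; not a Linux host")
    else:
        print("viable:", viable_backends(host_flags),
              "nested:", kvm_is_nested(host_flags))
```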